Life After Levels is a collaboration founded by the NAHT and Frog Education.

It exists to help schools make the most of the opportunity presented by the removal of National Attainment Levels. This is our time to define the future of assessment.

The NAHT assessment principles are not just a new method; they are a totally new approach to assessment, with teaching and learning at its heart. These principles have now proved very successful, improving outcomes, raising teaching standards and reducing teacher workload, and in many cases reminding teachers why they joined the profession.

Many schools have received an unwelcome surprise following their SATs results, and it has become clear that a new approach is now necessary in both primary and secondary schools.

Curriculums and Exemplar Standards from our Partners

Our collaborators have developed curriculums with exemplar standards that are included in the Frog Curriculum Designer platform. Our partners include...

FrogProgress

A centralised assessment and progress reporting system developed from the ground up in close partnership with the NAHT, NAACE and leading schools across the UK.

MIS LINKED CLASSES

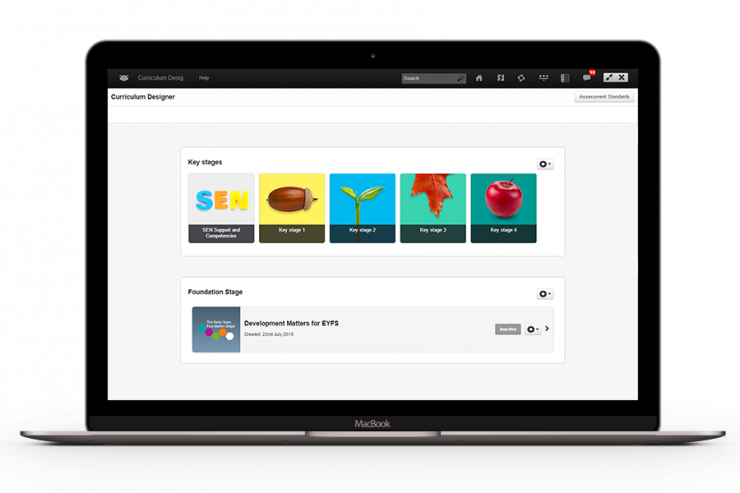

DIGITAL CURRICULUM DEVELOPMENT

DIRECTLY IMPACTS ON PUPIL PROGRESS

PROGRESSION REPORTS & TEACHING RESOURCES

CURRICULUMS & EXEMPLAR STANDARDS

PUBLISH YOUR CURRICULUM

Accurately measure student progress

FrogProgress is the leading choice for schools looking for a purpose-designed system to assess and measure progress in a life-after-levels or mastery-based curriculum.

Register for access to the Frog Curriculum Designer platform.